
At Doximity, we are running more and more of our applications and services on Kubernetes. To help our teams move faster, we’ve built a platform around Kubernetes to get code from developers’ laptops into the cloud quickly and safely.

When deploying to our platform, the first step is to containerize the application. Containerizing an application allows us to deploy and run it in a repeatable and reliable manner by packaging the application with its dependencies. Containerization also includes making configuration choices that enable an application to run better in a container environment, like logging to stdout. The outcome of containerizing an application is an image. Once a team has an image, they can run their application on the platform. But how do they get that image?

Let’s start at the beginning. As more of our development teams moved their applications onto our platform, they needed a way to continuously build and deploy their container images. We removed that burden by building it into our platform. We investigated different approaches to turning application source code into container images. Two of the most popular are Dockerfiles and buildpacks. In this post, I’ll explain the choices we’ve made in our platform to move our development teams from Dockerfiles to buildpacks.

What are Dockerfiles?

A Dockerfile is a text file that contains commands that will be executed by Docker to build a container image. Dockerfiles always start with a FROM directive specifying the base image to start from. Subsequent commands build on top of and modify that base image.

Below is an example. This Dockerfile uses a ruby base image. The bulk of the Dockerfile contains commands to add libraries and packages needed to build or run the application. If you know about BuildKit, you can configure these commands to optimize for cache utilization and other features. The last part of a Dockerfile is what runs by default when we launch the image.

    FROM ruby:2.6.6-alpine3.12

    # Install tools
    RUN apk add --no-cache --update \
        tool=v1.2.3 \
        more-tools=v3.4.5 \
        extra-tools=v6.7.8

    # Setup non-root user
    RUN addgroup -g 1000 -S app && adduser -u 1000 -S -D -G app app

    # Create some folders, install bundler, configure bundler, run bundle install, move some files around, set some environment variables, configure yarn, configure registry access, …

    # Example of a cache-optimized command
    RUN --mount=type=cache,uid=1000,gid=1000,target=/app/.cache/yarn \
        --mount=type=cache,uid=1000,gid=1000,target=/app/.cache/node_modules \
        --mount=type=cache,uid=1000,gid=1000,target=/app/tmp/cache \
        yarn install --check-files --non-interactive --production --modules-folder /app/.cache/node_modules --verbose && \
        cp -ar /app/.cache/node_modules node_modules && \
        SECRET_KEY_BASE=some-secret-key-base bundle exec rails assets:precompile

    # Port the application listens on
    ENV PORT=8080

    EXPOSE 8080

    # Default command when the image is launched
    CMD ["bundle", "exec", "rails", "server"]

What are Buildpacks?

If you’ve ever used Heroku or Cloud Foundry, then you’ve already used buildpacks.

A buildpack is a program that turns source code into a runnable container image. Usually, a buildpack encapsulates the toolchain of a single language ecosystem. There are buildpacks for Ruby, Go, Node.js, Java, Python, and more.

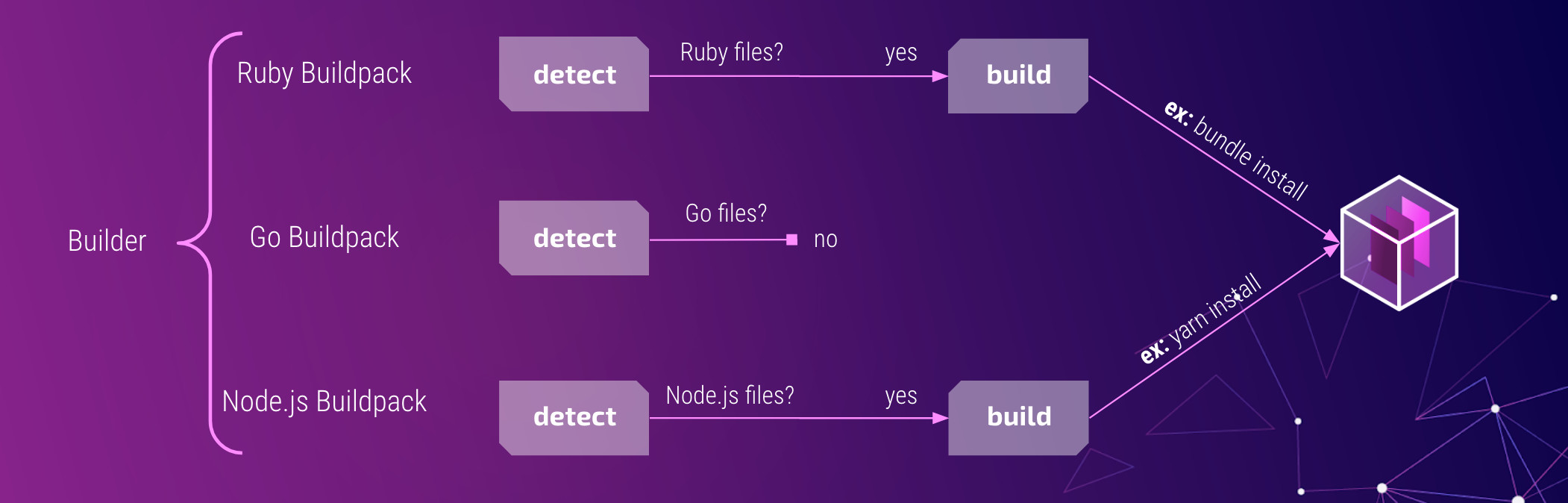

These buildpacks can be grouped together into a collection called a builder. In a builder, each buildpack inspects the application source code to detect whether it should participate in the build process. For example, a Go buildpack would look for files ending in .go, while a Ruby buildpack would look for .rb files. Once a buildpack (or set of buildpacks) has matched, the process moves on to the build step. That’s when the buildpack does its job. It might add a layer to the container image that contains a dependency, like the Go distribution, or it might run a command, like go build.
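
To make the detect step concrete, here is a minimal Python sketch of the idea. It is not how Paketo or any real buildpack implements detection; the `BUILDER` mapping and the `detect_go`, `detect_ruby`, `detect`, and `demo` names are made up for illustration, and the matching rule is the simplified file-extension check described above.

```python
import tempfile
from pathlib import Path


def detect_go(source_dir: Path) -> bool:
    """Hypothetical rule: opt in if the source tree contains any .go file."""
    return any(source_dir.rglob("*.go"))


def detect_ruby(source_dir: Path) -> bool:
    """Hypothetical rule: opt in if the source tree contains any .rb file."""
    return any(source_dir.rglob("*.rb"))


# A toy "builder": an ordered mapping of buildpack name -> detect function.
BUILDER = {"go": detect_go, "ruby": detect_ruby}


def detect(source_dir: Path) -> list[str]:
    """Return the names of the buildpacks that choose to participate."""
    return [name for name, matches in BUILDER.items() if matches(source_dir)]


def demo() -> list[str]:
    """Run detection against a throwaway directory holding one Ruby file."""
    with tempfile.TemporaryDirectory() as tmp:
        app = Path(tmp)
        (app / "app.rb").write_text("puts 'hello'\n")
        return detect(app)
```

Only the buildpacks returned by `detect` would go on to the build step; the rest sit the build out.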

There are a few places to find buildpacks these days. Heroku, the originator of the buildpack idea, has their own repository. Recently, Google has started integrating buildpacks into many of their cloud products. We chose to use Paketo buildpacks for our platform for a few reasons. The Paketo project is open source, backed by the Cloud Foundry Foundation, and has a full-time core development team that is sponsored by VMware. They’ve chosen to create many small, modular buildpacks that can be mixed, matched, and extended to serve a lot of different use cases. Under the covers, the Paketo buildpacks implement the Cloud Native Buildpacks specification, meaning that we can write our own or find buildpacks from different projects that might fit other needs. They’re also really focused on building out a broad community around buildpacks. In fact, we’ve already contributed our first buildpack to the Ruby ecosystem!

Paketo has their own set of reference builders (tiny, base, or full) that include support for Java, .NET Core, Node.js, Go, PHP, Ruby, and static file deployments using NGINX or Apache HTTPD. For our platform, we wanted to tailor the set of available buildpacks to those we are using to build our applications. To do this, we created a custom builder. Our builder includes the Go and Ruby Paketo buildpacks.

Besides buildpacks, the builder also references another container image called a stack. You can think of a stack like you would the base image defined by the FROM directive in a Dockerfile. It's a starter image that the buildpacks can build on top of. A stack is composed of a build-time image and a run-time image. The former is used when the buildpacks are analyzing the application source code and building the application’s container image. The latter is used as the base of the final application image. Just like the builder, a stack can be modified, and we’ve created our own based on the Paketo full stack with a few modifications. Now that we’d put the buildpacks that our development teams use in a builder that runs on top of a stack customized for their needs, we were ready to start building applications.

Let’s take a step back though and spend a little bit of time explaining why we chose to integrate buildpacks into our platform.

Decision Factors

Developer Productivity

In making this decision, our primary concern was developer productivity. We wanted to onboard development teams to the new platform with the least amount of friction.

Our first approach was a simple one. We asked development teams to include a Dockerfile in their source code. The platform would use that Dockerfile to build their application container image before it was deployed. After a few of the teams completed their onboarding, we discovered a few issues with this approach. Familiarity with Docker and Dockerfile syntax is not universal. Even those with experience may not be equipped to write a Dockerfile that can quickly build (and rebuild) a small and secure image. It’s the type of knowledge that tends to get “cargo-culted” around a development organization.

It was clear that our development teams wanted something that “just worked,” and it was costly for them to develop and optimize their own Dockerfiles from scratch. This is where buildpacks really made the most sense. With buildpacks, our development teams didn’t need to write, really, anything. They didn’t need to worry about the size and security of their application image. And they didn’t need to worry about optimizing the build and rebuild times. The buildpacks can make those decisions for them. And we can rely upon an open-source project and its community to contribute to making those buildpacks work well.

While some folks might find using buildpacks to be restrictive compared to the complete freedom of Dockerfiles, most of our developers saw that freedom as a burden. They didn’t want to keep on top of language runtime vulnerabilities or figure out the best way to keep the image small without slowing down builds. As platform operators, choosing buildpacks meant big efficiencies for us as well. If we identified a dependency that required updating, we didn’t need to make pull requests to a dozen repositories to update a dozen Dockerfiles written a dozen different ways. Instead, we bumped the buildpack or stack to a newer version and rolled out the change with our builder.

By moving to buildpacks, we had removed a lot of onboarding friction for teams looking to move their applications to our platform.

Security

Now that we had a few teams running their apps on our new platform, we wanted to ensure they were doing that securely. Many modern applications include dozens, if not thousands, of dependencies that handle standard plumbing and infrastructure concerns. Our applications are no different. Keeping on top of the new features, bug fixes, and vulnerability alerts for all of these dependencies is a full-time job. We wanted a solution that could take some of that burden off of our development teams.

Again, this is where buildpacks shine. The Paketo buildpacks are continuously updated to track upstream languages, runtimes, and frameworks in response to vulnerabilities, bug fixes, and new feature releases. Their core development team has built automated tooling that monitors those dependencies for new releases and updates them when needed. For a buildpack like Ruby, the team has automation that polls the release sites for the Ruby runtime, verifies that each new release executes well against the stack, and updates the buildpack with automated pull requests. In many cases, these new dependencies can be available for our application developers to use almost as soon as they are released.

There are other choices that the Cloud Native Buildpacks project has made that make buildpacks more secure by default. The buildpacks execute as a dedicated non-root user when building and running applications on top of the stack. Each stack image has detailed metadata describing the image’s components, such as the base operating system and packages. Each stack has separate images for building and running applications. The packages on the runtime image are curated to exclude compilers and other tools that might pose security risks. You can learn more by checking out the Paketo stacks repository.

Performance

Once our development teams had started deploying their apps onto our platform, we wanted to make sure they could keep doing that quickly. Writing a Dockerfile that rebuilds images quickly and efficiently might as well be a “dark art.” Not only do you need to know how to get a dependency into the image, you also need to know how to keep it cached in case you need it on a subsequent rebuild, and when to throw that cache away. Multiply that knowledge across the dozens of dependencies we commonly see in our apps. It’s not hard to see why our development teams would struggle to keep up their deployment velocity.

Buildpacks encode this knowledge into their implementation. When an application’s Gemfile.lock changes, the Ruby buildpack knows that it needs to rebuild the layer that contains all of the application’s Ruby gems. It also knows how to do that in a way that keeps the gems that didn’t change. This means that builds happen quickly and efficiently. We aren’t wasting developer time waiting for the same dependencies to be downloaded and installed over and over again.
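
The caching decision described here can be sketched in a few lines of Python. This is an illustrative model only, not the Ruby buildpack's actual code: the function names, the `lockfile_sha256` metadata key, and the gem names and versions in the usage note are all assumptions.

```python
import hashlib


def lockfile_checksum(lockfile_contents: str) -> str:
    """Fingerprint the lockfile so changes can be detected cheaply."""
    return hashlib.sha256(lockfile_contents.encode("utf-8")).hexdigest()


def plan_gems_layer(lockfile_contents: str, cached_metadata: dict) -> dict:
    """Decide whether the cached gems layer can be reused as-is.

    `cached_metadata` stands in for whatever was recorded alongside the
    layer on the previous build (hypothetical key: "lockfile_sha256").
    """
    checksum = lockfile_checksum(lockfile_contents)
    reuse = cached_metadata.get("lockfile_sha256") == checksum
    return {"reuse": reuse, "metadata": {"lockfile_sha256": checksum}}


def gems_to_install(wanted: dict[str, str], cached: dict[str, str]) -> dict[str, str]:
    """When a rebuild is needed, only fetch gems whose version changed."""
    return {
        name: version
        for name, version in wanted.items()
        if cached.get(name) != version
    }
```

For example, `gems_to_install({"rails": "6.1.1", "puma": "5.0.0"}, {"rails": "6.1.0", "puma": "5.0.0"})` selects only `rails`, which mirrors the idea of keeping the gems that didn’t change.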

There are also performance benefits that we like as platform operators. Buildpacks can create layers that can be shared between applications. For example, if all of our applications are using the same version of Go, there is only one layer with that version of Go created in our image registry. On a Kubernetes node, there’s only one copy of that layer that can be read by all of the container instances running on that node. If another application that already uses that layer is running on that node, we don’t need to fetch that layer. All of this means we can be efficient in the space our registry consumes, reduce the disk usage of applications on a Kubernetes node, and reduce deploy times.
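
Layer sharing works because layers are addressed by a digest of their content, so identical layers collapse into one stored blob. The following Python toy model shows the effect; `LayerStore` and `push_image` are hypothetical names, and the byte strings stand in for real layer contents.

```python
import hashlib


class LayerStore:
    """Toy content-addressed store: identical layer bytes are kept once."""

    def __init__(self) -> None:
        self.blobs: dict[str, bytes] = {}

    def push(self, layer: bytes) -> str:
        """Store a layer under its digest and return that digest."""
        digest = "sha256:" + hashlib.sha256(layer).hexdigest()
        # setdefault leaves an existing blob untouched, so a repeated
        # push of the same content costs no extra space.
        self.blobs.setdefault(digest, layer)
        return digest


def push_image(store: LayerStore, layers: list[bytes]) -> list[str]:
    """Push every layer of an image; the digest list acts as its manifest."""
    return [store.push(layer) for layer in layers]
```

Pushing two images that both start with the same Go layer, followed by one application layer each, leaves three blobs in the store rather than four. The same reasoning applies to the layer cache on a Kubernetes node.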

Customizability

Many of the comparisons between buildpacks and Dockerfiles can feel like they boil down to “convention versus configuration”. It's a matter of building with Lego blocks instead of Play-Doh. Both ideas have their place. When choosing a project that prefers convention, we wanted to make sure we weren’t prevented from making everything configurable. The Paketo core development team has made the right kind of tradeoffs, creating buildpacks that are easily composable, configurable, and replaceable.

Technically, this means that the buildpack our development teams use to build Ruby applications is really composed of many smaller buildpacks. These buildpacks handle discrete parts of the build process, like installing Bundler, compiling static assets with the Rails Asset Pipeline, or declaring the command the application should use to run. These pieces fit together, each providing and requiring parts of the build environment. Customizing the larger Ruby experience for our development teams can mean adding, removing, or reorganizing these pieces to better fit their needs.
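
One way to picture "providing and requiring" is as a check over an ordered list of buildpacks. The Python sketch below uses a simplified rule (a requirement is met if the same or an earlier buildpack provides it) and invented buildpack and dependency names; it is not the Cloud Native Buildpacks implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Buildpack:
    name: str
    provides: set[str] = field(default_factory=set)
    requires: set[str] = field(default_factory=set)


def unmet_requirements(order: list[Buildpack]) -> dict[str, set[str]]:
    """Map each buildpack name to the requirements nothing has provided yet."""
    available: set[str] = set()
    unmet: dict[str, set[str]] = {}
    for bp in order:
        available |= bp.provides
        missing = bp.requires - available
        if missing:
            unmet[bp.name] = missing
    return unmet


# Hypothetical composition of a larger "Ruby" buildpack.
RUBY_ORDER = [
    Buildpack("ruby-runtime", provides={"ruby"}),
    Buildpack("bundler", provides={"bundler"}, requires={"ruby"}),
    Buildpack("bundle-install", provides={"gems"}, requires={"ruby", "bundler"}),
    Buildpack("rails-assets", requires={"gems"}),
]
```

With the full `RUBY_ORDER`, `unmet_requirements` returns an empty mapping. Dropping the `bundler` entry leaves `bundle-install` with an unmet `bundler` requirement, which is the kind of feedback you want when adding, removing, or reordering pieces.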

Today, we use a custom builder that includes the buildpacks that our development teams care about. We’ve chosen those pieces that work for them, and as we learn more about their specific needs, we’ll continue to customize and streamline that set of buildpacks to make our image-building experience more useful, efficient, and secure.

Community

Beyond just using the buildpacks provided by the Paketo project, we’ve also started to build our own. Let me tell you a bit about our first custom buildpack and our experience working with the Paketo core team to contribute it upstream.

The Paketo project, while still a relatively new community, is growing, and our experiences collaborating with the core team have been very productive. When we first started exploring using buildpacks to build our Ruby applications, we found that the buildpack was missing a feature that we needed. We weren’t the only ones. There was already an open GitHub issue that described the problem. We needed the Ruby buildpack to do asset precompilation as part of the build process. After some guidance from the Ruby buildpack maintainers (thank you Sophie Wigmore & Ryan Moran), we decided to contribute to the Paketo project by creating the rails-assets buildpack!

We appreciate being able to easily contribute to and collaborate with the Paketo project. They enabled us to contribute a new feature without the responsibility of continuing to build, maintain, and regularly release new versions.

Kubernetes Support

Beyond the buildpacks themselves, the Cloud Native Buildpacks project has enabled the community to develop a Kubernetes-native container-building service called kpack. The service provides declarative Kubernetes resource definitions for mapping source code to buildpacks, and then on to images that can run in our platform. It constantly monitors the application source code, buildpacks, and stack, rebuilding our images when there are changes. As a platform team, having a platform that is as easy to maintain and upgrade as the applications running on it is key to our success in keeping it running.
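
The rebuild trigger can be modeled as a comparison between the inputs of the last build and the inputs observed now. The Python sketch below captures only that decision; it is not kpack's API (kpack is configured through Kubernetes resources), and `BuildInputs`, its fields, and `rebuild_reasons` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildInputs:
    """The three things the text says are monitored (field names assumed)."""
    source_revision: str
    buildpack_versions: tuple[str, ...]
    stack_digest: str


def rebuild_reasons(last_built: BuildInputs, current: BuildInputs) -> list[str]:
    """Return which inputs changed since the last build; empty means no rebuild."""
    reasons = []
    if last_built.source_revision != current.source_revision:
        reasons.append("source")
    if last_built.buildpack_versions != current.buildpack_versions:
        reasons.append("buildpacks")
    if last_built.stack_digest != current.stack_digest:
        reasons.append("stack")
    return reasons
```

A controller loop would rebuild the image whenever `rebuild_reasons` returns a non-empty list, so a stack update alone is enough to produce a fresh image without any change to the application source.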

Summary

At Doximity, we aim to build great products for our clinicians. Internally, we are building a platform to help our teams deliver those products more efficiently. In the process, we’ve thought a lot about how to navigate the container image-building ecosystem. We decided to prioritize a buildpacks-based image building workflow over a Dockerfile one. I hope this helps as you make decisions about your own platform.
